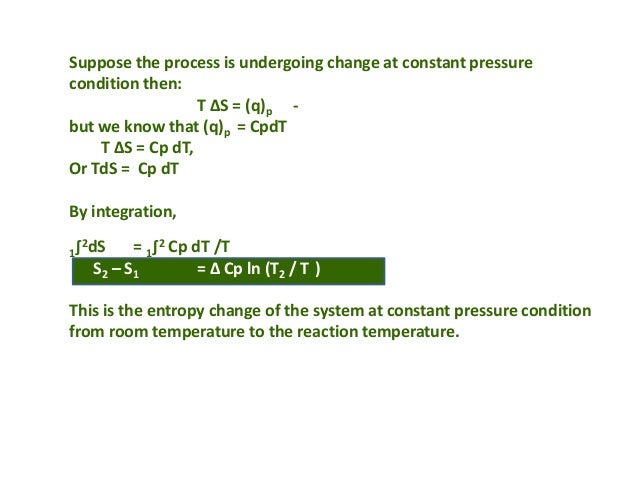

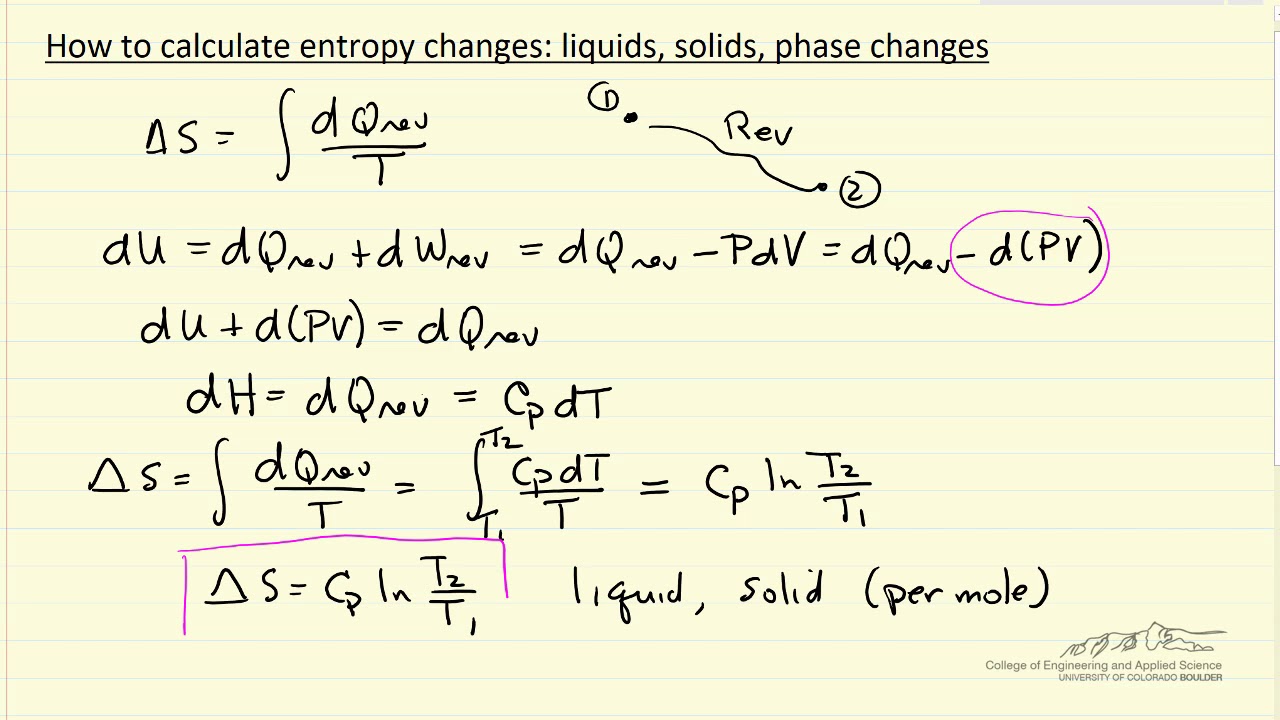

There are several equations to calculate the entropy change:

1. When the process occurs at a constant temperature, $\Delta S = q_{rev}/T$.
2. If the reaction is known, we can use a table of standard entropy values to find $\Delta S^\circ_{rxn} = \sum S^\circ_{\text{products}} - \sum S^\circ_{\text{reactants}}$. Here $\sum S^\circ_{\text{products}}$ is the sum of the standard entropies of the products and $\sum S^\circ_{\text{reactants}}$ is the sum of the standard entropies of the reactants, each multiplied by its stoichiometric coefficient.
3. It is also possible to use the Gibbs free energy ($\Delta G$) and the enthalpy ($\Delta H$) to find $\Delta S$. At constant temperature $\Delta G = \Delta H - T\Delta S$, so $\Delta S = (\Delta H - \Delta G)/T$.
4. When the temperature changes during the process, we can estimate the entropy change as $\Delta S \approx Q/T_{av}$, where $T_{av}$ is the average temperature. For example, when hot and cold water are mixed, the total change in entropy is the change in entropy of the hot water plus the change in entropy of the cold water.

The total entropy change includes both the entropy change of the system and the entropy change of the surroundings. In a spontaneous (irreversible) process, this total entropy increases. The entropy of a solid, whose particles are tightly packed, is lower than that of a gas, whose particles are free to move.

Consider a Carnot engine. In step 1 the engine absorbs heat $Q_h$ at a temperature $T_h$, so its entropy change is $\Delta S_1 = Q_h/T_h$. In step 3 it rejects heat $Q_c$ at $T_c$, so $\Delta S_3 = -Q_c/T_c$. Steps 2 and 4 are reversible adiabatic processes and contribute no entropy change. The net entropy change of the engine in one cycle of operation is then

$$\Delta S_E = \Delta S_1 + \Delta S_2 + \Delta S_3 + \Delta S_4 = \frac{Q_h}{T_h} - \frac{Q_c}{T_c}.$$

Because $Q_h/T_h = Q_c/T_c$ for a Carnot engine, $\Delta S_E = 0$.

It was later realized that Boltzmann's entropy formula is a special case of the entropy expression in Shannon's information theory:

$$S = -K \sum_{i=1}^{n} p_i \log(p_i)$$

Figure: Shannon entropy formula, from the publication "A novel methodology for diversity preservation in evolutionary algorithms".

This expression is called Shannon entropy or information entropy. In information theory, however, the symbol for entropy is $H$ and the constant $k_B$ is absent. Shannon called this quantity entropy and represented it by $H$ in the following formula:

$$H = p_1 \log_s(1/p_1) + p_2 \log_s(1/p_2) + \cdots + p_k \log_s(1/p_k)$$

One thing worth noting about this equation is the symbol $\log_s$, which denotes a logarithm to the base $s$; with $s = 2$, $H$ is measured in bits. (For a review of logs, see logarithm.)

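
As a rough sketch of some of the equations above in code, the Python snippet below estimates the entropy change when hot and cold water mix (using $\Delta S \approx Q/T_{av}$ for each body), checks that a Carnot engine's net entropy change over one cycle is zero, and rearranges $\Delta G = \Delta H - T\Delta S$ for $\Delta S$. The function names, the specific heat of water (4186 J/(kg·K)) and the assumption that no heat escapes to the surroundings are choices made for this illustration only.

```python
"""Entropy-change estimates in SI units (J, K, J/K)."""

# Specific heat of liquid water in J/(kg*K); a common textbook value (assumption).
C_WATER = 4186.0


def mixing_entropy_estimate(m_hot, t_hot, m_cold, t_cold, c=C_WATER):
    """Estimate the total entropy change when hot and cold water mix.

    Uses dS ~ Q / T_av for each body. Temperatures in kelvin, masses in kg.
    Assumes both bodies share the same specific heat and no heat is lost.
    """
    # Energy balance: heat lost by the hot water = heat gained by the cold water.
    t_final = (m_hot * t_hot + m_cold * t_cold) / (m_hot + m_cold)
    q = m_hot * c * (t_hot - t_final)        # heat transferred, J
    t_av_hot = (t_hot + t_final) / 2         # average temperature of the hot water
    t_av_cold = (t_cold + t_final) / 2       # average temperature of the cold water
    ds_hot = -q / t_av_hot                   # hot water loses heat
    ds_cold = q / t_av_cold                  # cold water gains heat
    return ds_hot + ds_cold                  # total = hot-water change + cold-water change


def carnot_net_entropy(q_hot, t_hot, t_cold):
    """Net entropy change of a Carnot engine over one cycle."""
    q_cold = q_hot * t_cold / t_hot          # Carnot relation: Qh/Th = Qc/Tc
    ds1 = q_hot / t_hot                      # step 1: absorbs Qh at Th
    ds2 = 0.0                                # step 2: reversible adiabatic (isentropic)
    ds3 = -q_cold / t_cold                   # step 3: rejects Qc at Tc
    ds4 = 0.0                                # step 4: reversible adiabatic (isentropic)
    return ds1 + ds2 + ds3 + ds4


def entropy_from_gibbs(delta_h, delta_g, t):
    """Rearranges dG = dH - T*dS (constant T) into dS = (dH - dG) / T."""
    return (delta_h - delta_g) / t
```

For 1 kg of water at 353.15 K (80 °C) mixed with 1 kg at 293.15 K (20 °C), `mixing_entropy_estimate(1.0, 353.15, 1.0, 293.15)` gives about +36 J/K. The positive total is what we expect for this irreversible process, while the reversible Carnot cycle returns zero.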
Entropy is the thermodynamic function used to measure a system's disorder or randomness. A reversible adiabatic expansion is isentropic, but an irreversible adiabatic expansion is not: its entropy increases. The entropy change of a process is defined as the heat transferred reversibly divided by the absolute temperature at which the transfer occurs.
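
A minimal sketch of this definition for a reversible, isothermal process: melting one mole of ice at its normal melting point, assuming an approximate molar enthalpy of fusion of 6.01 kJ/mol (a textbook value). The function name is hypothetical.

```python
def isothermal_entropy_change(q_rev, t_kelvin):
    """Entropy change dS = q_rev / T for a reversible process at constant absolute T."""
    if t_kelvin <= 0:
        raise ValueError("absolute temperature must be positive (kelvin)")
    return q_rev / t_kelvin


# Melting 1 mol of ice at 273.15 K; heat absorbed ~6010 J (approximate enthalpy of fusion).
ds_fusion = isothermal_entropy_change(6010.0, 273.15)
print(f"{ds_fusion:.1f} J/K")  # 22.0 J/K
```

The positive result reflects the increase in disorder as the ordered solid becomes a liquid.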

Entropy is denoted by S, and by S° in the standard state. It is a state function: its value depends only on the current state of the system, not on how the system reached that state. Like temperature, pressure and volume, entropy is a thermodynamic property, but unlike them it cannot be visualized easily.
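
Because entropy is a state function, a reaction's standard entropy change can be computed from tabulated standard entropies: $\Delta S^\circ_{rxn} = \sum n S^\circ(\text{products}) - \sum m S^\circ(\text{reactants})$. The sketch below does this for $2\,\mathrm{H_2(g)} + \mathrm{O_2(g)} \rightarrow 2\,\mathrm{H_2O(l)}$. The $S^\circ$ values are approximate 298 K textbook figures included only for illustration (tables differ slightly), and the function name is hypothetical.

```python
# Approximate standard molar entropies at 298.15 K in J/(mol*K); illustrative values,
# check them against the table you are actually using.
S_STANDARD = {"H2(g)": 130.7, "O2(g)": 205.2, "H2O(l)": 70.0}


def reaction_entropy(products, reactants, table=S_STANDARD):
    """dS_rxn = sum(n * S_products) - sum(m * S_reactants).

    products / reactants map a species name to its stoichiometric coefficient.
    """
    s_products = sum(n * table[species] for species, n in products.items())
    s_reactants = sum(m * table[species] for species, m in reactants.items())
    return s_products - s_reactants


# 2 H2(g) + O2(g) -> 2 H2O(l)
ds_rxn = reaction_entropy({"H2O(l)": 2}, {"H2(g)": 2, "O2(g)": 1})
print(f"{ds_rxn:.1f} J/K")  # -326.6 J/K
```

The negative sign fits the earlier point about solids, liquids and gases: three moles of gas become two moles of liquid, so the products are more ordered.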

The German physicist Rudolf Clausius introduced the concept of entropy in the 1850s and coined the term in 1865. The principle of entropy gives insight into the direction of spontaneous change in many everyday phenomena.

Entropy is also a measure of a system's molecular randomness or disorder. Because work is generated from ordered molecular motion, the more disordered a system is, the less of its thermal energy is available to do work. Entropy measures the system's thermal energy per unit temperature. The basic difference between entropy and enthalpy is that entropy (S) denotes the degree of randomness or disorder within a system, whereas enthalpy (H) is the total heat content of a system. If the process occurs at a constant temperature, then $\Delta S = q_{rev}/T$, where $\Delta S$ is the change in entropy, $q_{rev}$ is the heat transferred reversibly, and $T$ is the absolute temperature in kelvin.

In this blog post, I will first talk about the concept of entropy in information theory and physics, then about how to use perplexity to measure the quality of language models in natural language processing. The concept of entropy has been widely used in machine learning and deep learning.
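
The sketch below connects the information-theory side of entropy to perplexity. It implements Shannon's formula $H = \sum_i p_i \log_s(1/p_i)$ with a configurable base $s$ (base 2 gives bits) and computes perplexity as the base raised to the average negative log-probability per token. The function names are hypothetical, and the token probabilities in the usage lines are made up for illustration; a real language model would supply them.

```python
import math
from collections import Counter


def shannon_entropy(probs, base=2.0):
    """H = sum_i p_i * log_base(1 / p_i); outcomes with p_i == 0 contribute nothing."""
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)


def empirical_entropy(symbols, base=2.0):
    """Entropy of the symbol frequencies observed in a sequence."""
    counts = Counter(symbols)
    n = len(symbols)
    return shannon_entropy([c / n for c in counts.values()], base)


def perplexity(token_probs, base=2.0):
    """base ** (average negative log-probability the model gave each observed token)."""
    n = len(token_probs)
    cross_entropy = -sum(math.log(p, base) for p in token_probs) / n
    return base ** cross_entropy


print(shannon_entropy([0.5, 0.5]))            # a fair coin: 1.0 bit
print(empirical_entropy("aabb"))              # two equally frequent symbols
print(perplexity([0.25, 0.25, 0.25, 0.25]))   # uniform guess over 4 tokens: about 4
```

For a model that spreads probability uniformly over V tokens, the perplexity is V, which is why perplexity is often read as the effective number of equally likely choices per token; lower is better.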
